AI Breaks Differently Than Software and Apps. How Will You Know?

I remember back in the early Windows days when the blue screen of death was an everyday occurrence. Autosave was a feature of the future, so we quickly learned to save our work often. Fortunately, those Microsoft training-wheels days are behind us.

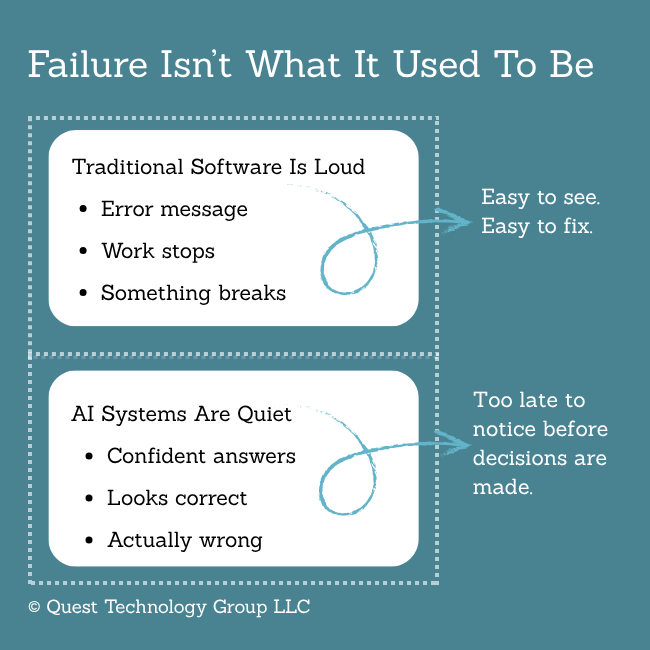

Software is still imperfect and failures happen. We get an error message. There is usually a resolution, and we move on. Meanwhile, the software provider is continually fixing known bugs and developing enhancements. AI-driven applications are different.

AI Failures Happen

In this post, we talked about why buying AI-driven applications is different from buying traditional software. Let’s look at something more practical: how AI systems fail. Why does this matter? Because AI failures are often silent, and silent failures are where most of the risk lies.

. . . .

Difference 1: Our familiar "it works" isn't the same as "it's trustworthy".

Traditional software either works or it doesn’t, and its failures are immediately obvious.

AI-driven apps and services, on the other hand, silently fail in reliability and accuracy. They can be mostly right, sometimes wrong, and unpredictable all at the same time. There are no loud error messages, so we continue working. Every AI system produces unseen errors because it is built on patterns learned from data instead of repeatable, programmed steps.

Why this matters: We’re not measuring performance with AI applications. We’re evaluating how consistent and accurate the results are every time.

I was having a conversation with ChatGPT last week, and the responses were just okay. It missed the nuances that were important to the business leader audience we were talking about. The responses were a lot of word puffery without any useful direction.

ChatGPT finally acknowledged that I had bumped up against one of AI’s limitations. “AI is better at identifying patterns than understanding human nuance and context.” And there it is.

One question to ask: Where does this application tend to produce inconsistent results most often?

Listen for: The vendor should continually measure results and know which conditions produce inconsistent ones. Can they clearly explain where their system drifts? If so, then they’re doing their work. If not, it’s best to reconsider.
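For readers who want to see what "measuring consistency" can look like in practice, here is a minimal sketch. It assumes we have asked the same question several times and saved the answers. The function name `consistency_rate` and the sample answers are made up for illustration, and a real vendor's process would likely be more involved.

```python
from collections import Counter


def consistency_rate(responses):
    """Return the share of responses that match the most common answer."""
    if not responses:
        raise ValueError("need at least one response")
    # Light normalization so casing and extra spaces don't count as differences.
    normalized = [" ".join(r.lower().split()) for r in responses]
    most_common_count = Counter(normalized).most_common(1)[0][1]
    return most_common_count / len(normalized)


# Hypothetical answers collected by asking the same question five times.
runs = ["Net 30", "net 30", "Net 45", "Net  30", "Net 30"]
print(consistency_rate(runs))  # 0.8
```

A rate of 0.8 means one answer in five disagreed with the rest. Whether that is acceptable is still a judgment call for our specific purpose.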

Our judgment determines when these inconsistencies are acceptable, or not, for our specific purpose.

. . . .

Difference 2: AI doesn't announce its failures.

One of the biggest complaints about AI tools is that they are so cheerfully confident even when they’re wrong. For people looking for encouragement and confirmation, this is probably a boost to their day. Responsible people are more likely to be irritated.

I recently changed my ChatGPT settings to overcome some of this. It doesn’t solve the fundamental problem of inaccurate responses, but it does reduce some of the eager puppy stuff.

The problem is knowing when confident responses are accurate. There is no warning. No software patch. No help desk to walk us through a fix. Quiet failures are an inherent part of how these AI models work. By the time we’ve discovered that misguided word, phrase or insight, a decision might have been made.

Why this matters: We can’t rely on traditional software failure signals. Our careful attention and judgment are how we measure the importance of failure for each given situation.

One question you can ask: How will we know if this system is producing degraded or inconsistent results?

Listen for: How does the vendor monitor and measure accuracy? Is there a repeatable process handled by humans? Most importantly, does the vendor acknowledge that failure is baked into the model?
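One way to picture a repeatable, human-handled process is a regular spot check: people review a sample of AI answers, mark each one correct or not, and the team compares the result to an earlier baseline. The sketch below is only an illustration. The names `accuracy` and `is_degraded`, the sample counts, and the 5-point tolerance are all assumptions, not any vendor's actual method.

```python
def accuracy(verdicts):
    """verdicts: list of True/False marks from human reviewers."""
    if not verdicts:
        raise ValueError("need at least one reviewed answer")
    return sum(verdicts) / len(verdicts)


def is_degraded(baseline, current, tolerance=0.05):
    """Flag a drop larger than the tolerance (an assumed threshold)."""
    return current < baseline - tolerance


# Hypothetical review results: 19 of 20 correct last month, 16 of 20 this week.
last_month = [True] * 19 + [False] * 1
this_week = [True] * 16 + [False] * 4

baseline = accuracy(last_month)
current = accuracy(this_week)
print(is_degraded(baseline, current))  # True
```

The point is not the arithmetic. It is that someone has to look at real answers on a schedule, because the system will not raise its hand when quality slips.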

. . . .

Difference 3: The product continually changes, and you won't know it.

We’re all accustomed to the software update process so it’s natural to assume that AI-driven products mature the same way. Not so.

Because AI products are based on what they are taught to do, they are continually changing. Large language models are regularly retrained and updated with new content and data that influence their behavior. Even without an update, if you ask an AI tool the same question, you might not get the same response each time.

Remember, patterns are discovered and constructed into responses in real time. There are no stored answers.

Why this matters: AI products behave differently even when there are no significant model changes. If you use Claude or ChatGPT, you know that the models are being updated often with new model numbers. Between these model releases, the underlying data we rely on is regularly changing.

This isn’t some kind of sneaky tactic AI providers have devised to keep us all confused. It’s simply the way this technology works.

One question you can ask: How do you track and notify customers when the system and behaviors change?

Listen for: Do they explain in simple words how AI tools consume data and create responses?

The Bottom Line

AI tools have created the opportunity for us to slow down and explore the information we’re being handed. It’s easy to fall into the trap of cheerful confidence. Knowing that confident ≠ correct gives us permission to be more critical thinkers. That's a good thing.

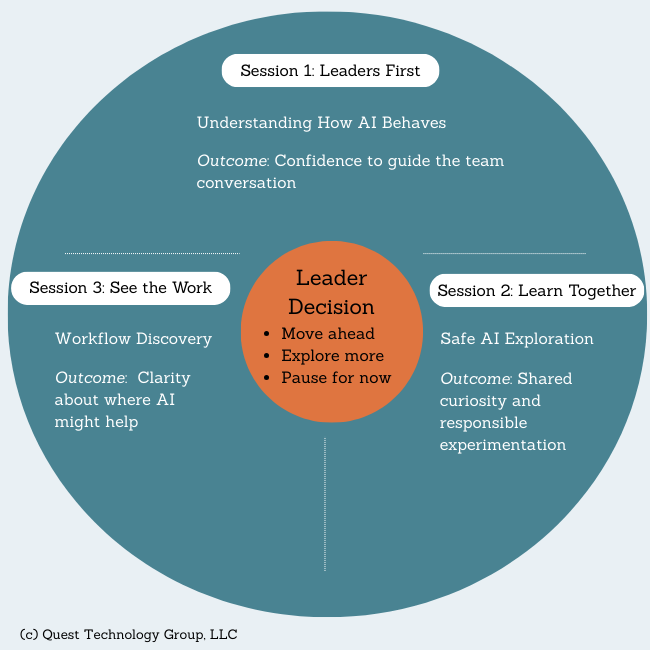

Where Leaders Start with AI

AI isn't the hard part. Starting in the right place is.

Answer the question many leaders are quietly asking themselves: "How do I even begin without screwing it up?"

Explore AI Clarity Learning Circle

Linda Rolf is a lifelong curious learner who believes a knowledge-first approach builds valuable, lasting client relationships. She loves discovering the unexpected connections among technology, data, information, people and process. For more than four decades, Linda and Quest Technology Group have been their clients' trusted advisor and strategic partner.
